AI Chip Market by Chip Type (GPU, CPU, ASIC, TPU, FPGA, NPU), Function (Training, Inference), Processing Type (Cloud/Data Center, Edge), Application (Data Centers, Autonomous Vehicles, Consumer Electronics, Industrial IoT, Healthcare), and End-use (Data Centers & Cloud, Automotive, Consumer Electronics, Industrial, Healthcare, Telecommunications) - Global Forecast to 2036

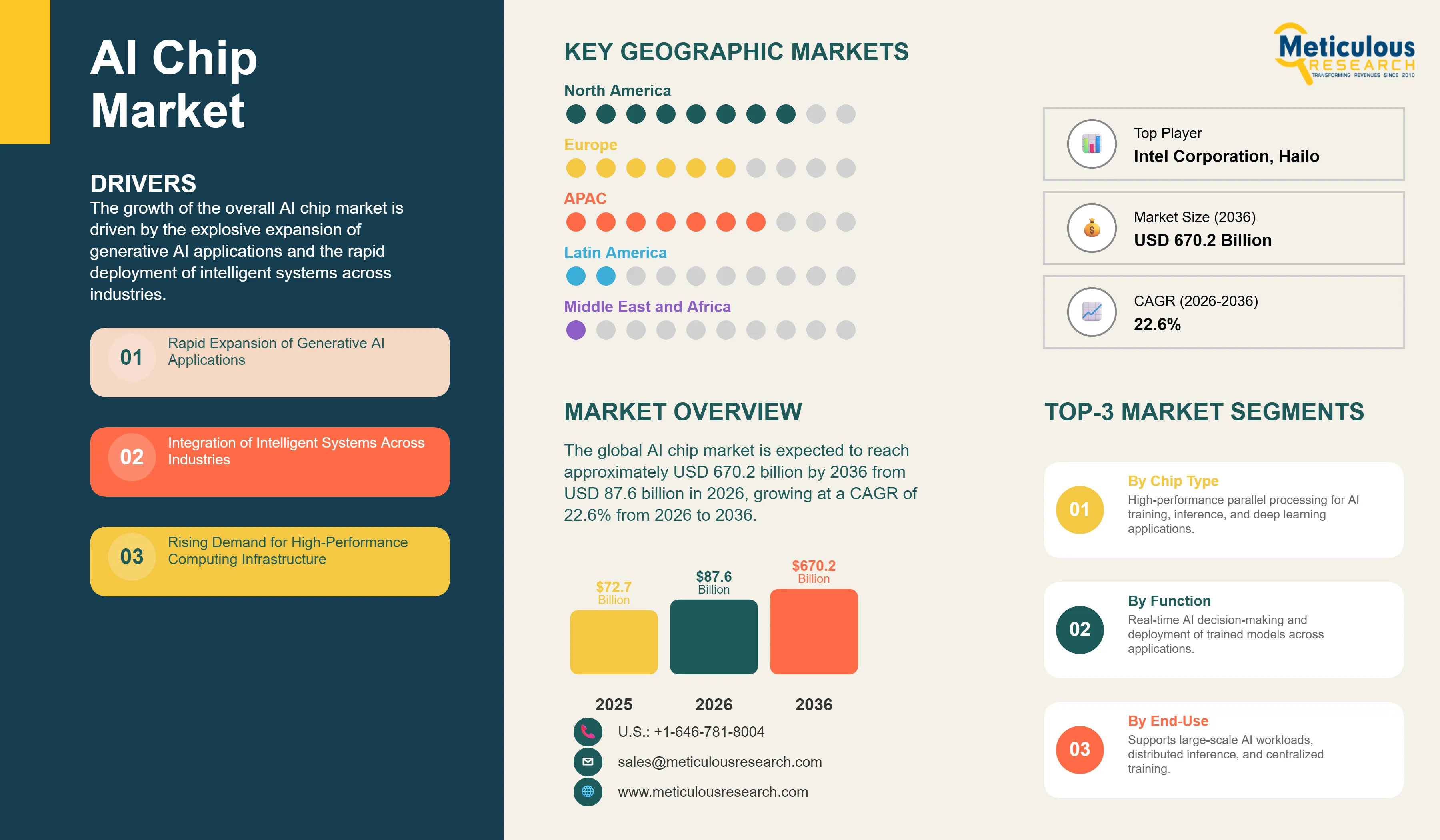

Report ID: MRSE - 1041761 | Pages: 298 | Published: Feb-2026 | Formats: PDF | Category: Semiconductor and Electronics | Delivery: 24 to 72 Hours

The global AI chip market was valued at USD 72.7 billion in 2025. The market is expected to reach approximately USD 670.2 billion by 2036 from USD 87.6 billion in 2026, growing at a CAGR of 22.6% from 2026 to 2036. The growth of the overall AI chip market is driven by the explosive expansion of generative AI applications and the rapid deployment of intelligent systems across industries. As organizations seek to integrate more sophisticated computational power into large language models, autonomous systems, and real-time inference networks, AI chips have become essential for maintaining high-performance processing and operational reliability. The rapid expansion of edge computing initiatives and the increasing need for specialized hardware in data centers and autonomous vehicles continue to fuel significant growth of this market across all major geographic regions.

AI chips are specialized semiconductor processors that use advanced architectures and dedicated hardware acceleration to provide optimized parallel processing and improved computational efficiency. These processors include graphics processing units, tensor processing units, application-specific integrated circuits, and neural processing units designed to accelerate machine learning workloads and enhance system performance across the device lifecycle. The market is defined by high-efficiency technologies such as transformer-optimized architectures and custom silicon designs, which significantly enhance training speed and inference precision in high-demand computing environments. These processors are indispensable for organizations seeking to optimize their AI operations and meet aggressive performance and cost-efficiency targets.

The market includes a diverse set of solutions, from general-purpose GPUs for research applications to specialized ASICs and custom accelerators for production deployments. These chips are increasingly integrated with advanced components such as high-bandwidth memory and interconnect technologies to support real-time inference processing and distributed training of large-scale neural networks. The ability to provide stable, high-precision computation while minimizing power consumption has made AI chip technology the preferred choice for institutions where computational accuracy and operational efficiency are paramount.

The global technology sector is pushing hard to modernize computing infrastructure, aiming to meet ambitious AI deployment targets and next-generation application requirements. This drive has increased the adoption of specialized semiconductor solutions, with advanced AI chips helping to enable breakthrough capabilities for generative AI, autonomous systems, and intelligent automation. At the same time, the rapid growth in edge computing and physical AI applications is increasing the need for energy-efficient, low-latency processing solutions.

Proliferation of Generative AI and Custom Silicon Integration

Technology leaders across the industry are rapidly shifting to specialized AI accelerators, moving well beyond traditional general-purpose computing setups. NVIDIA's latest Blackwell and Rubin platforms deliver significantly higher training throughput, while custom ASICs from hyperscalers like Google, Amazon, and Microsoft have slashed operational costs in global data center deployments. The real game-changer comes with domain-specific architectures featuring integrated high-bandwidth memory that maintains peak operational efficiency even in high-volume inference environments. These advancements make large-scale model deployment practical and cost-effective for everyone from small-scale startups to global technology giants chasing excellence in AI capabilities and lower operational costs.

Innovation in Edge Computing and Autonomous System Deployment

Innovation in edge AI processors and physical AI systems is rapidly driving the AI chip market, as inference operations become more distributed and applications more autonomous. Chip suppliers like Qualcomm and NVIDIA are now designing chips that combine real-time processing speed with on-device inference in a single platform, reducing power consumption and simplifying deployment logistics. These systems often involve advanced neural processing units and specialized accelerators capable of handling complex vision and decision-making workloads without compromising safety or operational reliability.

At the same time, growing focus on energy efficiency is pushing manufacturers to develop AI chip solutions tailored to low-power operation and sustainable computing principles. These systems help reduce environmental impact through the use of advanced process nodes and power-optimized architectures. By combining high-density computational performance with robust energy efficiency, these new designs support both technological advancement and corporate sustainability, strengthening the resilience of the broader semiconductor value chain.

| Parameter | Details |
|---|---|
| Market Size by 2036 | USD 670.2 Billion |
| Market Size in 2026 | USD 87.6 Billion |
| Market Size in 2025 | USD 72.7 Billion |
| Market Growth Rate (2026-2036) | CAGR of 22.6% |
| Dominating Region | North America |
| Fastest Growing Region | Asia-Pacific |
| Base Year | 2025 |
| Forecast Period | 2026 to 2036 |
| Segments Covered | Chip Type, Function, Processing Type, Application, End-use, and Region |
| Regions Covered | North America, Europe, Asia-Pacific, Latin America, and Middle East & Africa |

Drivers: Digital Transformation and AI Infrastructure Expansion

A key driver of the AI chip market is the rapid movement of the global technology industry toward AI-native computing models. Global demand for accelerated training capabilities, real-time inference processing, and scalable AI deployment has created significant incentives for the adoption of specialized chip infrastructure. The trend toward generative AI and the integration of intelligent systems into unified platforms is pushing manufacturers toward scalable solutions that specialized AI chips are well suited to provide. As enterprise adoption of AI applications rises and compute requirements become more demanding through 2036, the need for robust, high-performance infrastructure is expected to increase significantly. Advanced GPU architectures and custom accelerators, with their efficient parallel processing, are therefore considered a crucial enabler of modern AI delivery strategies.

Opportunity: Edge AI Proliferation and Autonomous System Integration

The rapid growth of the edge computing market and autonomous technologies provides significant opportunities for the AI chip market. The global surge in physical AI deployment and intelligent robotics has created strong demand for systems that can handle local processing and provide ultra-low latency for critical decision-making. These applications require high reliability, power efficiency, and the ability to handle diverse AI workloads, all of which advanced edge AI solutions are designed to deliver. The autonomous vehicle market is set to expand significantly through 2036, with specialized AI chips poised to take a growing share as manufacturers seek to maximize safety and minimize operational complexity. Furthermore, the increasing need for on-device inference and privacy-preserving computation is stimulating demand for distributed AI solutions that provide high-speed processing and operational independence.

Why Does the GPU Segment Lead the Market?

The GPU segment accounts for a significant portion of the overall AI chip market in 2026. This is mainly attributed to the versatile use of this technology in supporting large-scale training projects, high-throughput inference requirements, and complex deep learning applications within modern data center environments. These processors offer the most comprehensive way to ensure parallel processing across diverse AI workloads. The data center and research sectors alone consume a large share of GPUs, with major deployments in North America and Asia-Pacific demonstrating the technology's capability to handle high-density computational requirements. However, the ASIC segment is expected to grow at a rapid CAGR during the forecast period, driven by the growing need for custom silicon implementations, energy-efficient operations, and technical specialization in domain-specific AI transformations.

How Does the Inference Segment Dominate?

Based on function, the inference segment holds the largest share of the overall market in 2026. This is primarily due to the massive volume of production AI deployments and the rigorous performance standards required for real-time decision-making. Operators of large-scale inference systems increasingly specify specialized hardware platforms to meet latency standards and user expectations for responsive AI environments.

The training segment is expected to witness steady growth during the forecast period. The continued development of foundation models and the complexity of next-generation AI architectures are driving the requirement for advanced training systems that can handle massive parameter counts and extended training cycles while ensuring absolute reliability for research-critical computations.

Why Does the Data Centers & Cloud Segment Lead the Market?

The data centers and cloud segment commands the largest share of the global AI chip market in 2026. This dominance stems from its superior ability to support large-scale AI workloads, centralized model training, and distributed inference operations, making it the end-use of choice for high-performance AI processing. Large-scale deployments in hyperscale facilities, enterprise data centers, and cloud service providers drive demand, with advanced systems from providers like NVIDIA, AMD, and Intel enabling reliable performance in complex computing environments.

However, the automotive segment is poised for rapid growth through 2036, fueled by expanding applications in autonomous driving systems and advanced driver assistance technologies. Automotive manufacturers face mounting pressure to integrate sophisticated AI capabilities for safety-critical applications, where specialized AI chips provide essential processing power for real-time perception and decision-making.

How is North America Maintaining Dominance in the Global AI Chip Market?

North America holds the largest share of the global AI chip market in 2026. The region's leading position is primarily attributed to massive investments in AI infrastructure and the presence of the world's leading technology companies, particularly in the United States. The U.S. alone accounts for a significant portion of global AI chip deployment, with its position as a leading adopter of generative AI technology and advanced computing infrastructure driving sustained growth. The presence of leading manufacturers like NVIDIA, Intel, and AMD, along with hyperscale cloud providers and a well-developed semiconductor ecosystem, provides a robust market for both standard and high-performance AI solutions.

Which Factors Support Asia-Pacific and Europe Market Growth?

Asia-Pacific and Europe together account for a substantial share of the global AI chip market. The growth of these markets is mainly driven by the need for technological advancement in the manufacturing and consumer electronics sectors. The demand for AI chips in Asia-Pacific is mainly due to its massive semiconductor fabrication capacity and the presence of manufacturers like TSMC, Samsung, and leading technology companies in China, South Korea, and Japan.

In Europe, the leadership in automotive innovation and the push for digital sovereignty are driving the adoption of specialized AI solutions. Countries like Germany, France, and the UK are at the forefront, with significant focus on integrating AI capabilities into automotive systems and industrial automation to ensure the highest levels of performance and competitiveness.

Companies such as NVIDIA Corporation, Intel Corporation, Advanced Micro Devices Inc. (AMD), and Qualcomm Technologies Inc. lead the global AI chip market with a comprehensive range of high-performance computing solutions, particularly for large-scale data center applications and edge deployments. Meanwhile, players including Google LLC (Tensor Processing Units), Apple Inc. (Neural Engine), Microsoft Corporation (Maia), and Amazon Web Services (Trainium, Inferentia) focus on custom silicon infrastructure and integrated cloud platforms targeting the hyperscale computing and enterprise sectors. Emerging manufacturers and specialized players such as Cerebras Systems, Groq Inc., Tenstorrent, SambaNova Systems, Graphcore, and Hailo are strengthening the market through innovations in wafer-scale computing, inference acceleration, and edge AI platforms.

The global AI chip market is expected to grow from USD 87.6 billion in 2026 to USD 670.2 billion by 2036.

The global AI chip market is projected to grow at a CAGR of 22.6% from 2026 to 2036.
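As a quick sanity check, the stated growth rate can be reproduced from the report's own endpoint figures using the standard compound annual growth rate formula. The sketch below is illustrative only: the function name `cagr` and the variable names are hypothetical, and it assumes ten compounding periods between 2026 and 2036.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate, returned as a fraction (0.10 == 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint figures quoted in the report, in USD billion.
market_2026 = 87.6
market_2036 = 670.2

# Assumption: 2026 is the starting year, so there are 10 compounding periods.
periods = 2036 - 2026

implied_rate = cagr(market_2026, market_2036, periods)
print(f"Implied CAGR, 2026 to 2036: {implied_rate:.1%}")
```

Under these assumptions the implied rate rounds to 22.6%, which matches the figure quoted above.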

GPUs are expected to dominate the market in 2026 due to their superior ability to support large-scale training workloads and high-throughput inference operations. However, the ASIC segment is projected to be the fastest-growing segment owing to the increasing need for custom silicon implementations and energy-efficient specialized processing in complex AI environments.

Generative AI and edge computing are transforming the AI chip landscape by demanding higher computational density, lower power consumption, and improved real-time processing capabilities. These technologies drive the adoption of advanced platforms like transformer-optimized architectures and distributed inference systems, enabling manufacturers to support the complex workflows and low-latency requirements of next-generation AI applications.

North America holds the largest share of the global AI chip market in 2026. The region's leading position is primarily attributed to massive investments in AI infrastructure and the presence of leading technology companies and hyperscale cloud providers.

The leading companies include NVIDIA Corporation, Intel Corporation, Advanced Micro Devices Inc., Qualcomm Technologies Inc., Google LLC, Apple Inc., Microsoft Corporation, and Amazon Web Services.

Published Date: Feb-2026